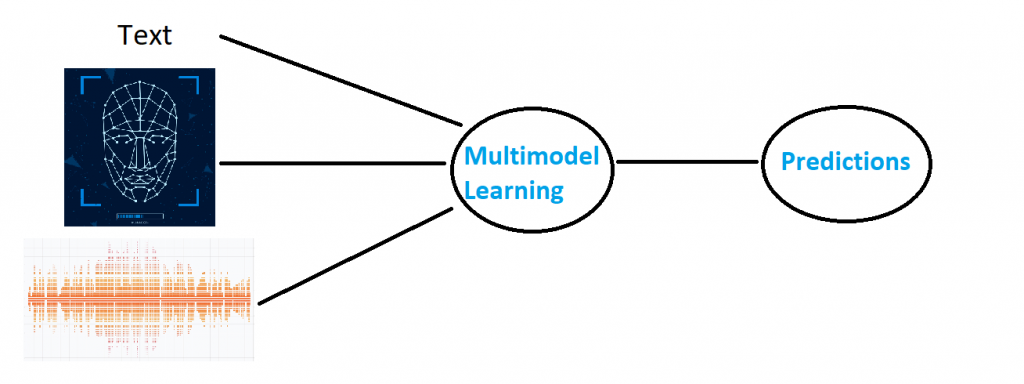

In multimodal learning, the focus is on developing systems that integrate information from diverse data types such as text, images, and audio. By combining complementary modalities, our AI models aim to build a richer understanding of the input, enabling more robust and versatile solutions across a range of applications.

a. Single-Branch Networks for Multimodal Training

In this study, we address the rapidly growing volume of multimedia content on social media by proposing single-branch networks. This approach learns discriminative representations for both unimodal and multimodal tasks, handling audio, images, and text within one network rather than separate modality-specific branches. A central aspect of our research is developing training approaches and algorithms that allow the single-branch network to be trained with either a single modality or multiple modalities while maintaining strong performance.
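To make the single-branch idea concrete, the sketch below shows one hypothetical encoder whose weights are shared by every modality, so face and voice features pass through the same branch. It assumes each modality has already been converted by some front-end into feature vectors of a common length; the class name `SingleBranchEncoder`, the dimensions, and the feature values are illustrative assumptions, and training (for example, with an identity-classification loss) is omitted.

```python
import math
import random


def l2_normalize(vec):
    """Scale a vector to unit length (returns a copy unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


class SingleBranchEncoder:
    """Hypothetical single branch: ONE weight matrix shared by every modality."""

    def __init__(self, in_dim, out_dim, seed=0):
        rng = random.Random(seed)
        # Shared weights: the same matrix embeds audio, image, or text features.
        self.weights = [
            [rng.gauss(0.0, 0.1) for _ in range(in_dim)] for _ in range(out_dim)
        ]

    def embed(self, features):
        """Linearly project a feature vector from any modality, then L2-normalize it."""
        projected = [sum(w * x for w, x in zip(row, features)) for row in self.weights]
        return l2_normalize(projected)


encoder = SingleBranchEncoder(in_dim=4, out_dim=3)
face_features = [0.2, 0.7, 0.1, 0.5]   # assumed pre-extracted face features
voice_features = [0.6, 0.1, 0.3, 0.4]  # assumed pre-extracted voice features

# Both modalities go through the very same branch and land in one embedding space.
face_emb = encoder.embed(face_features)
voice_emb = encoder.embed(voice_features)
```

Because the branch is shared, the same encoder can be trained on batches from one modality or a mix of modalities without changing the architecture.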

Related Publications

b. Cross-Modal Verification and Recognition

In this study, we introduce a challenging task: establishing associations between faces and voices when the same individual speaks different languages. The primary objective is to answer two key questions: "Is face-voice association language independent?" and "Can a speaker be reliably recognized regardless of the spoken language?". Answering these questions is important for understanding how well the technology works, and the answers will guide the development of multilingual biometric systems. Going forward, we aim to better understand how face-voice associations change across languages.
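Face-voice verification is commonly scored by comparing embeddings from the two modalities. The sketch below assumes face and voice embeddings already exist in a shared space and uses cosine similarity with a fixed threshold; the threshold of 0.5, the function names, and all vectors are hypothetical. Scoring the same face against voice clips of one speaker in two different languages is one simple way to probe whether the association is language independent.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def verify(face_emb, voice_emb, threshold=0.5):
    """Accept the face-voice pair as the same identity if similarity >= threshold."""
    return cosine_similarity(face_emb, voice_emb) >= threshold


# Hypothetical embeddings: one face, the same speaker in two languages, and an impostor.
face = [0.9, 0.1, 0.4]
voice_language_a = [0.8, 0.2, 0.5]
voice_language_b = [0.7, 0.3, 0.6]
impostor_voice = [-0.5, 0.9, -0.2]

# Both same-speaker clips pass the threshold; the impostor clip does not.
for label, voice in [
    ("language_a", voice_language_a),
    ("language_b", voice_language_b),
    ("impostor", impostor_voice),
]:
    print(label, round(cosine_similarity(face, voice), 3), verify(face, voice))
```

If the association were strongly language dependent, the score for the unseen language would drop sharply relative to the training language, even for the true speaker.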

Related Publications

c. Multimedia Learning Using Conceptual Graph

In this study, we explore multimedia text-to-picture mobile learning systems based on conceptual graph matching. Multimedia learning involves building mental representations from words paired with images; however, producing pedagogical illustrations currently requires labor-intensive, time-consuming manual work. Conventional systems that rely on selecting optimal keywords and sentences may also lose information. We aim to address these limitations by investigating approaches based on conceptual graph matching, working toward more effective and efficient multimedia learning systems on mobile platforms.
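As a simplified stand-in for conceptual graph matching, the sketch below represents each conceptual graph as a set of (concept, relation, concept) triples and selects the picture whose annotated graph overlaps most with the sentence's graph, using Jaccard similarity. The graphs, relation names, and file names are hypothetical, and full conceptual graph matching also compares graph structure (for example, through projection), which this sketch does not attempt.

```python
def graph_similarity(graph_a, graph_b):
    """Jaccard overlap of two graphs given as sets of (concept, relation, concept) triples."""
    if not graph_a and not graph_b:
        return 0.0
    return len(graph_a & graph_b) / len(graph_a | graph_b)


def best_picture(sentence_graph, picture_graphs):
    """Return the name of the picture whose annotated graph best matches the sentence."""
    return max(
        picture_graphs,
        key=lambda name: graph_similarity(sentence_graph, picture_graphs[name]),
    )


# Hypothetical graph for the sentence "The boy eats an apple."
sentence = {("boy", "agent", "eat"), ("eat", "object", "apple")}

# Hypothetical picture library, each picture annotated with its own graph.
pictures = {
    "boy_eating_apple.png": {
        ("boy", "agent", "eat"),
        ("eat", "object", "apple"),
        ("apple", "attribute", "red"),
    },
    "girl_reading.png": {("girl", "agent", "read"), ("read", "object", "book")},
}

choice = best_picture(sentence, pictures)
```

Here the first picture shares two of its three triples with the sentence (similarity 2/3), while the second shares none, so the first picture is selected.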

Related Publications