Computational Imaging

January 25, 2024

AI for Multimodal Learning

January 25, 2024

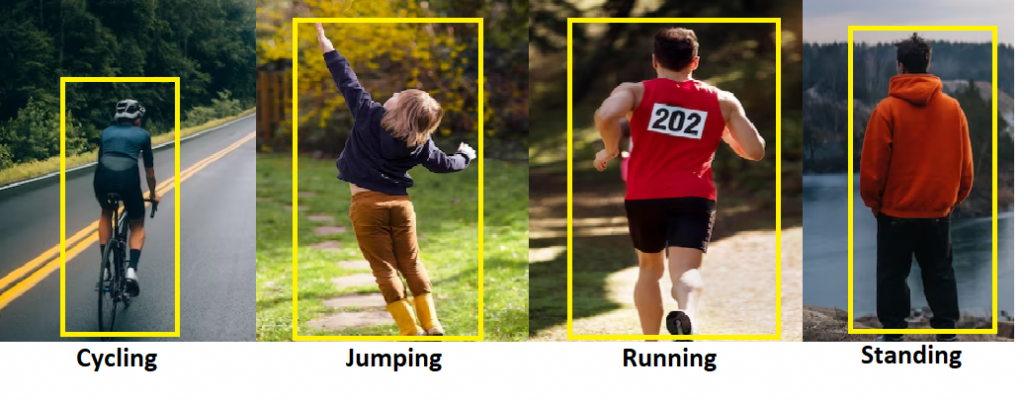

In the action recognition vertical, we develop technologies that enable computers to interpret and understand human actions from visual cues. By advancing algorithms and models, we aim to improve the accuracy and efficiency of action recognition systems, with applications in security, surveillance, and human-computer interaction.

a. Cross-View Action Recognition

In this study, our primary focus is to develop techniques for 3D action recognition that are robust to variations in viewpoint. Our approach processes point clouds directly, aiming to achieve cross-view action recognition even when the viewpoint is unknown or was not seen during training. The goal is to make 3D action recognition systems more reliable and versatile under viewpoint changes.
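To make the idea of viewpoint robustness concrete, the following is a minimal sketch of one classical baseline: aligning a point cloud to its principal axes so that rotated copies of the same cloud map to the same coordinates. This is an illustration under our own assumptions, not the method used in this study; the function name `canonicalize` and the sign-fixing rule based on third moments are hypothetical choices made for this example.

```python
import numpy as np

def canonicalize(points):
    """Align a point cloud (N x 3) to its principal axes.

    Illustrative baseline only: it assumes the three principal variances
    are distinct and that the cloud is not symmetric along any axis.
    """
    pts = np.asarray(points, dtype=float)
    centered = pts - pts.mean(axis=0)           # remove translation
    # Rows of vt are the principal directions, ordered by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    aligned = centered @ vt.T                   # express points in that basis
    # Principal directions are defined only up to sign; fix the sign so the
    # third moment along each axis is non-negative.
    signs = np.sign(np.sum(aligned ** 3, axis=0))
    signs[signs == 0] = 1.0
    return aligned * signs

def rotation_zx(angle_z, angle_x):
    """Rotation about the x axis followed by a rotation about the z axis."""
    cz, sz = np.cos(angle_z), np.sin(angle_z)
    cx, sx = np.cos(angle_x), np.sin(angle_x)
    rz = np.array([[cz, -sz, 0.0], [sz, cz, 0.0], [0.0, 0.0, 1.0]])
    rx = np.array([[1.0, 0.0, 0.0], [0.0, cx, -sx], [0.0, sx, cx]])
    return rz @ rx

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Skewed, anisotropic synthetic cloud standing in for a captured frame.
    cloud = rng.exponential(size=(200, 3)) * np.array([3.0, 2.0, 1.0])
    rotated = cloud @ rotation_zx(0.7, -1.1).T  # same cloud, new viewpoint
    print(np.allclose(canonicalize(cloud), canonicalize(rotated), atol=1e-8))
```

A hand-crafted alignment like this breaks down for symmetric or noisy clouds, which is one reason to handle viewpoint variation inside the recognition model rather than in preprocessing.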

Related Publications

b. Human Action Classification Using Locality-constrained Linear Coding

In this study, we develop algorithms based on locality-constrained linear coding (LLC) that capture discriminative information about human actions from spatio-temporal subsequences of videos. By encoding discriminative features within localized spatio-temporal contexts, these algorithms aim to make action recognition more efficient.
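For readers unfamiliar with LLC, the sketch below shows the commonly used approximated form of the coding step: each descriptor is reconstructed from its k nearest codebook entries under a sum-to-one constraint, and the codes of all descriptors in a subsequence are max-pooled. The function names, the parameters `k` and `beta`, and the use of max pooling are assumptions made for illustration; the page does not specify how the descriptors or the codebook are obtained in this study.

```python
import numpy as np

def llc_encode(x, codebook, k=5, beta=1e-4):
    """Approximated LLC code of one descriptor x (D,) for a codebook (M x D)."""
    x = np.asarray(x, dtype=float)
    codebook = np.asarray(codebook, dtype=float)
    k = min(k, len(codebook))
    # Locality: keep only the k codebook entries closest to x.
    dist2 = np.sum((codebook - x) ** 2, axis=1)
    idx = np.argsort(dist2)[:k]
    # Shift the selected entries so that x is the origin.
    z = codebook[idx] - x
    cov = z @ z.T                               # k x k local covariance
    # Regularize for numerical stability (scaled by the trace).
    reg = beta * np.trace(cov)
    if reg == 0.0:
        reg = beta
    cov = cov + reg * np.eye(k)
    # Solve the constrained least-squares problem, then enforce sum(w) == 1.
    w = np.linalg.solve(cov, np.ones(k))
    w = w / w.sum()
    code = np.zeros(len(codebook))
    code[idx] = w
    return code

def max_pool_codes(descriptors, codebook, k=5, beta=1e-4):
    """Encode every descriptor of a subsequence and max-pool the codes."""
    codes = np.stack([llc_encode(d, codebook, k, beta) for d in descriptors])
    return codes.max(axis=0)

if __name__ == "__main__":
    # Toy 2-D codebook and descriptors, purely for illustration.
    codebook = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [10.0, 10.0]])
    descriptors = np.array([[0.2, 0.1], [0.1, 0.9]])
    print(llc_encode(descriptors[0], codebook, k=2))
    print(max_pool_codes(descriptors, codebook, k=2))
```

Because only k entries are non-zero per descriptor and the weights come from a small k x k linear system, the encoding stays cheap even for large codebooks.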

Related Publications

c. Real-Time Action Recognition

In this study, we concentrate on algorithms that merge discriminative information from depth images and 3D joint positions. The objective is to improve action recognition accuracy by combining the strengths of the two modalities. By exploring the synergies between depth information and 3D joint positions, we aim to contribute to more robust and accurate action recognition systems.
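One simple way to combine the two modalities is feature-level fusion, sketched below under our own assumptions: joint positions are expressed relative to a root joint, each modality's feature vector is L2-normalized so that neither dominates, and the two are concatenated. The function names, the choice of root joint, and the placeholder depth feature are hypothetical; the fusion scheme actually used in this study is not described on this page.

```python
import numpy as np

def relative_joint_positions(joints, root=0):
    """Flatten 3D joints (J x 3) after subtracting the root joint.

    Subtracting the root removes the dependence on where the person
    stands in the scene.
    """
    joints = np.asarray(joints, dtype=float)
    return (joints - joints[root]).ravel()

def fuse_features(depth_feat, joint_feat, eps=1e-12):
    """Concatenate two feature vectors after L2-normalizing each one."""
    d = np.asarray(depth_feat, dtype=float).ravel()
    j = np.asarray(joint_feat, dtype=float).ravel()
    d = d / (np.linalg.norm(d) + eps)           # eps guards against zero vectors
    j = j / (np.linalg.norm(j) + eps)
    return np.concatenate([d, j])

if __name__ == "__main__":
    # Toy skeleton with three joints; joint 0 is treated as the root.
    joints = np.array([[1.0, 1.0, 1.0], [2.0, 1.0, 1.0], [1.0, 3.0, 1.0]])
    # Placeholder for a descriptor computed from a depth image.
    depth_feat = np.array([3.0, 4.0])
    fused = fuse_features(depth_feat, relative_joint_positions(joints))
    print(fused.shape)
```

The fused vector can then be passed to any standard classifier; normalizing per modality before concatenation is a design choice that keeps the higher-dimensional modality from dominating distance computations.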

Related Publications